Applications are open for the ML Alignment & Theory Scholars (MATS) Summer 2025 Program, running Jun 16-Aug 22, 2025. First-stage applications are due Apr 18!

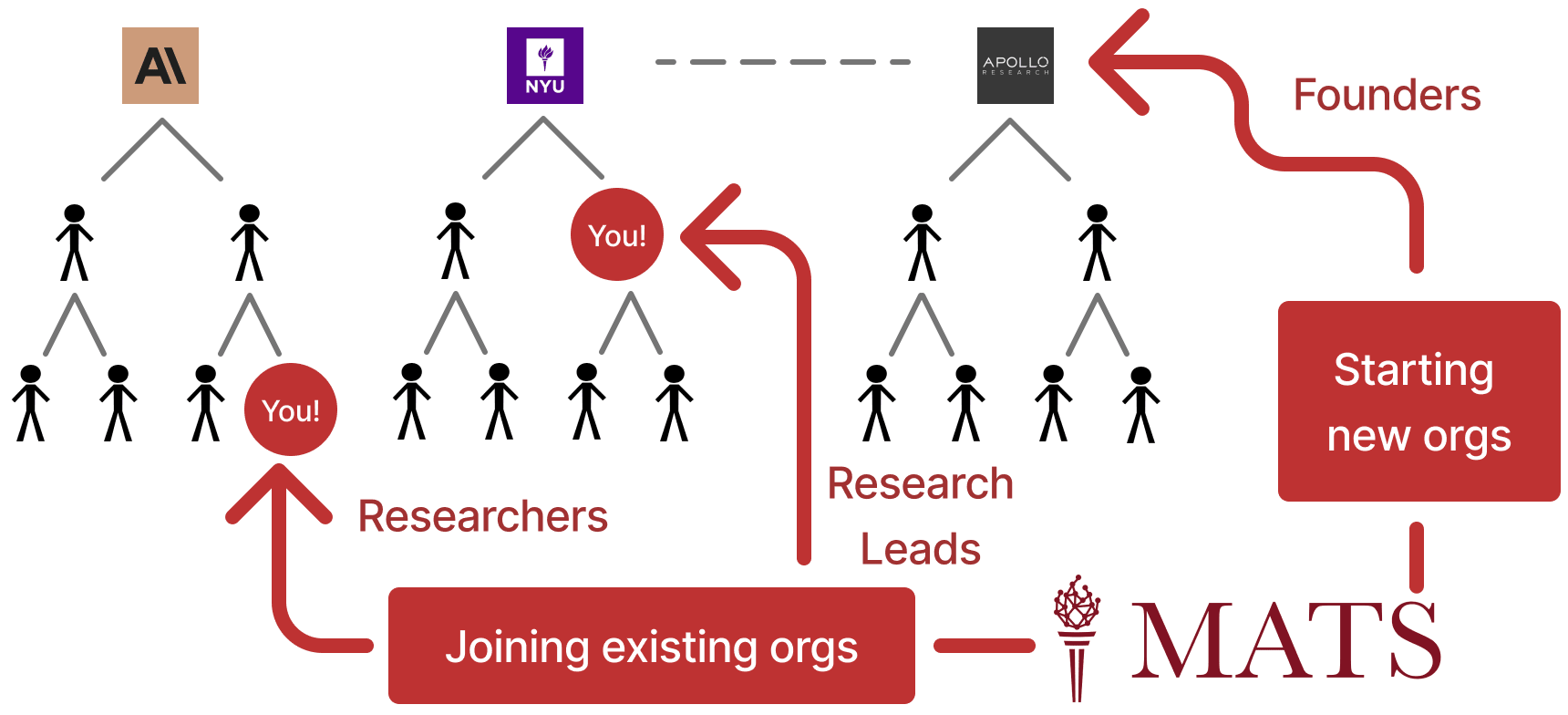

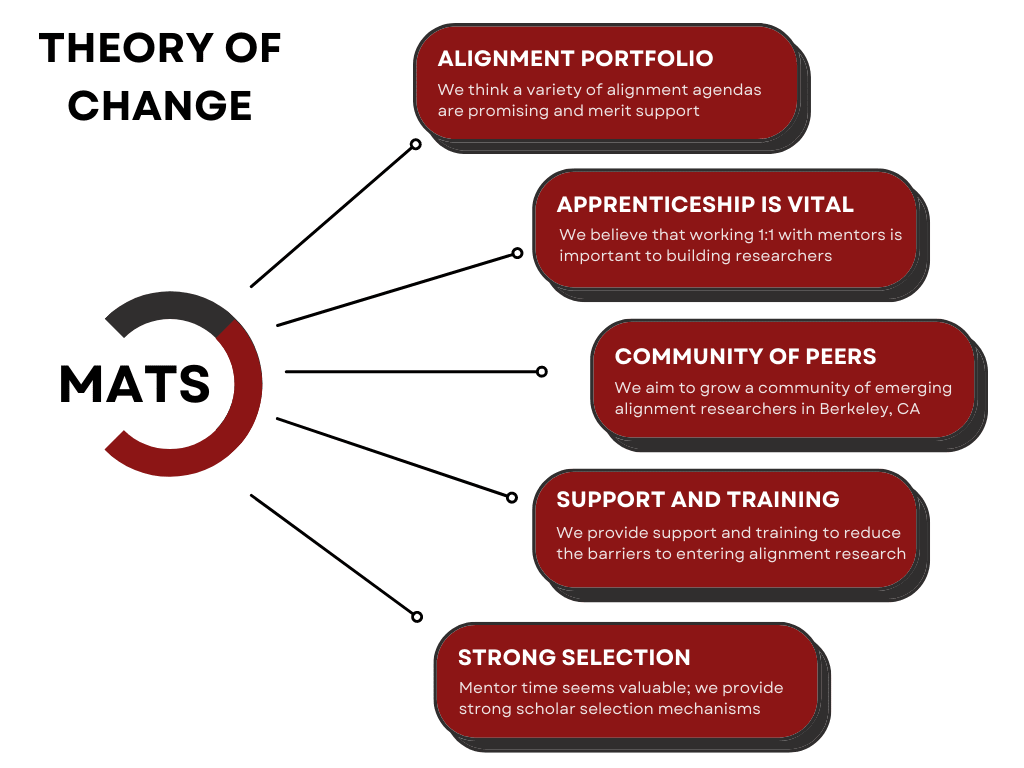

MATS is a twice-yearly, 10-week AI safety research fellowship program operating in Berkeley, California, with an optional 6-12 month extension program for select participants. Scholars are supported with a research stipend, shared office space, seminar program, support staff, accommodation, travel reimbursement, and computing resources. Our mentors come from a variety of organizations, including Anthropic, Google DeepMind, OpenAI, Redwood Research, GovAI, UK AI Security Institute, RAND TASP, UC Berkeley CHAI, Apollo Research, AI Futures Project, and more! Our alumni have been hired by top AI safety teams (e.g., at Anthropic, GDM, UK AISI, METR, Redwood, Apollo), have founded research groups (e.g., Apollo, Timaeus, CAIP, Leap Labs), and maintain a dedicated support network for new researchers.

If you know anyone who you think would be interested in [...]

---

Outline:

(01:41) Program details

(02:54) Applications (now!)

(03:12) Research phase (Jun 16-Aug 22)

(04:14) Research milestones

(04:44) Community at MATS

(05:41) Extension phase

(06:07) Post-MATS

(07:44) Who should apply?

(08:45) Applying from outside the US

(09:27) How to apply

---

First published: March 20th, 2025

Source: https://www.lesswrong.com/posts/9hMYFatQ7XMEzrEi4/apply-to-mats-8-0

Narrated by TYPE III AUDIO.
