Audio note: this article contains 212 uses of LaTeX notation, so the narration may be difficult to follow. There's a link to the original text in the episode description.

This is a cross-post. Some plots are meant to be viewed larger than LW will render them (and on a dark background), so it is recommended that this post be read on the original site.

Thanks to Zach Furman for discussion and ideas and to Daniel Murfet, Dmitry Vaintrob, and Jesse Hoogland for feedback on a draft of this post.

Introduction

Neural networks are machines not unlike gardens. When you train a model, you are growing a garden of circuits. Circuits can be simple or complex, useful or useless - all properties that inform the development and behaviour of our models.

Simple circuits are simple because they are made up of a small number [...]

---

Outline:

(00:35) Introduction

(03:15) Prior Work

(04:42) Our Contribution

(05:38) What is Singular Learning Theory?

(06:50) A Parameter's Journey Through Function Space

(08:44) Quantifying Degeneracy with Volume Scaling

(09:14) Basin Volume and Its Scaling

(09:53) Regular Versus Singular Landscapes

(10:55) Why Volume Scaling Matters

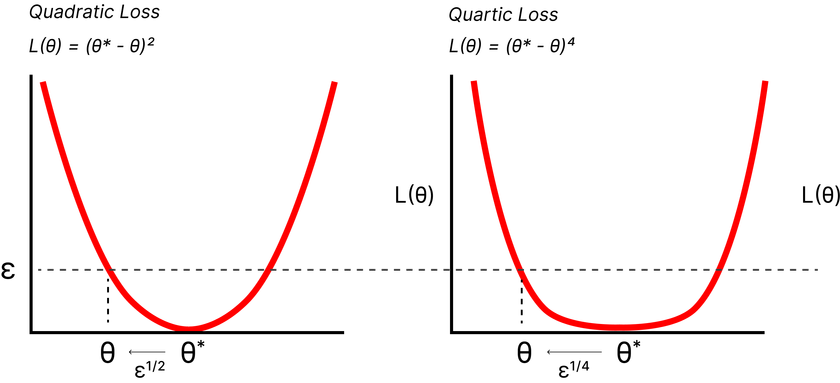

(13:04) A One-Dimensional Intuition

(13:34) Quadratic Loss:

(13:50) Quartic Loss:

(14:05) General Case:

(14:35) Calculating the Local Learning Coefficient, or LLC

(17:00) SGLD

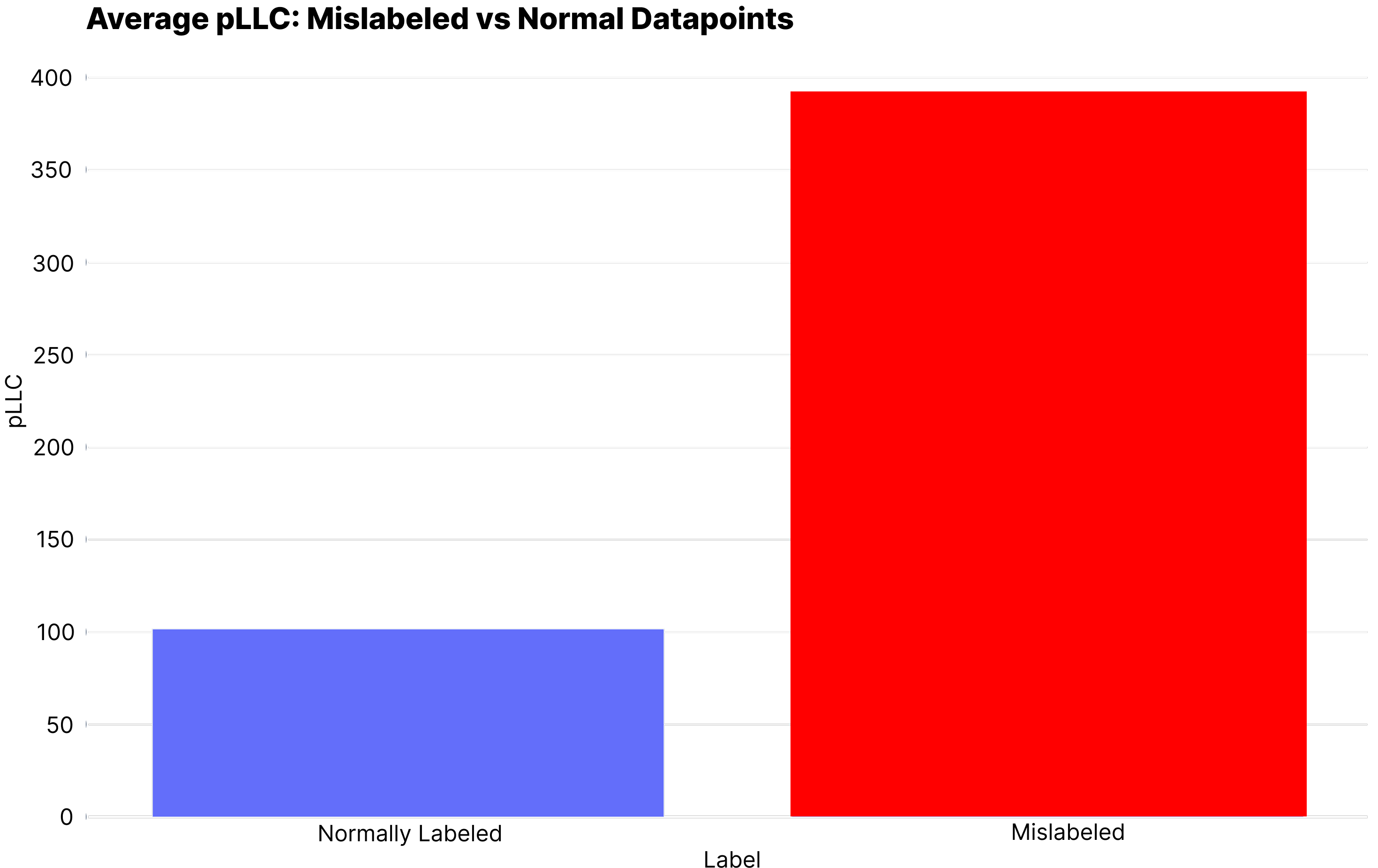

(18:12) The Per-Sample LLC, or (p)LLC

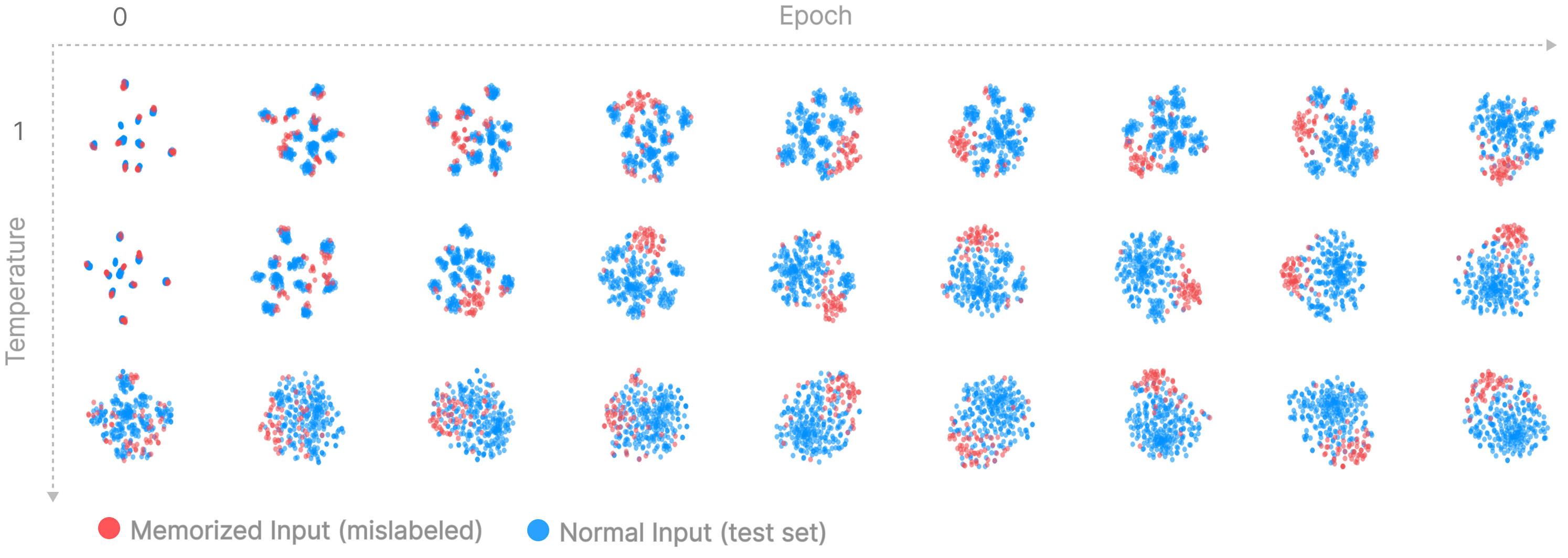

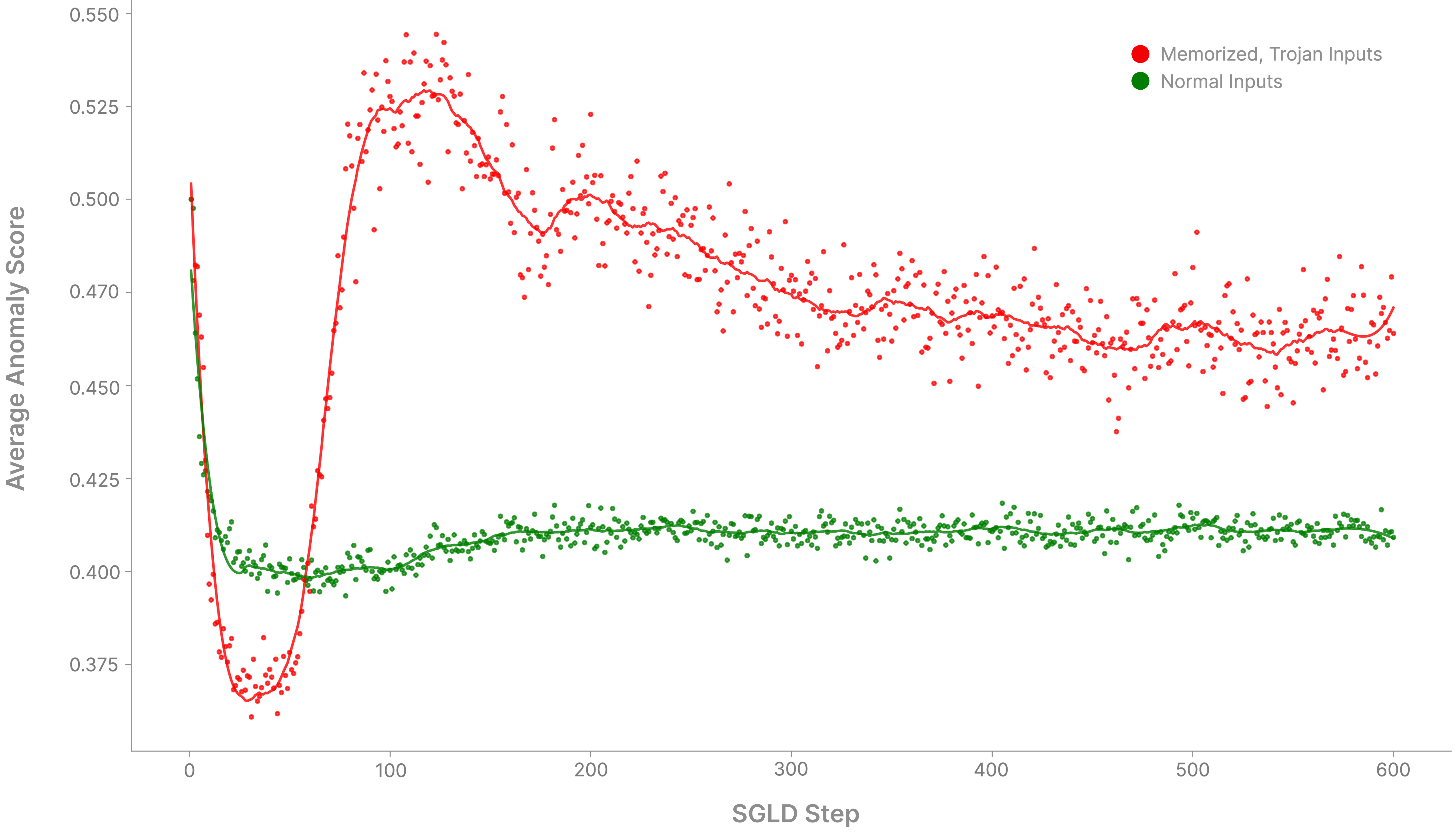

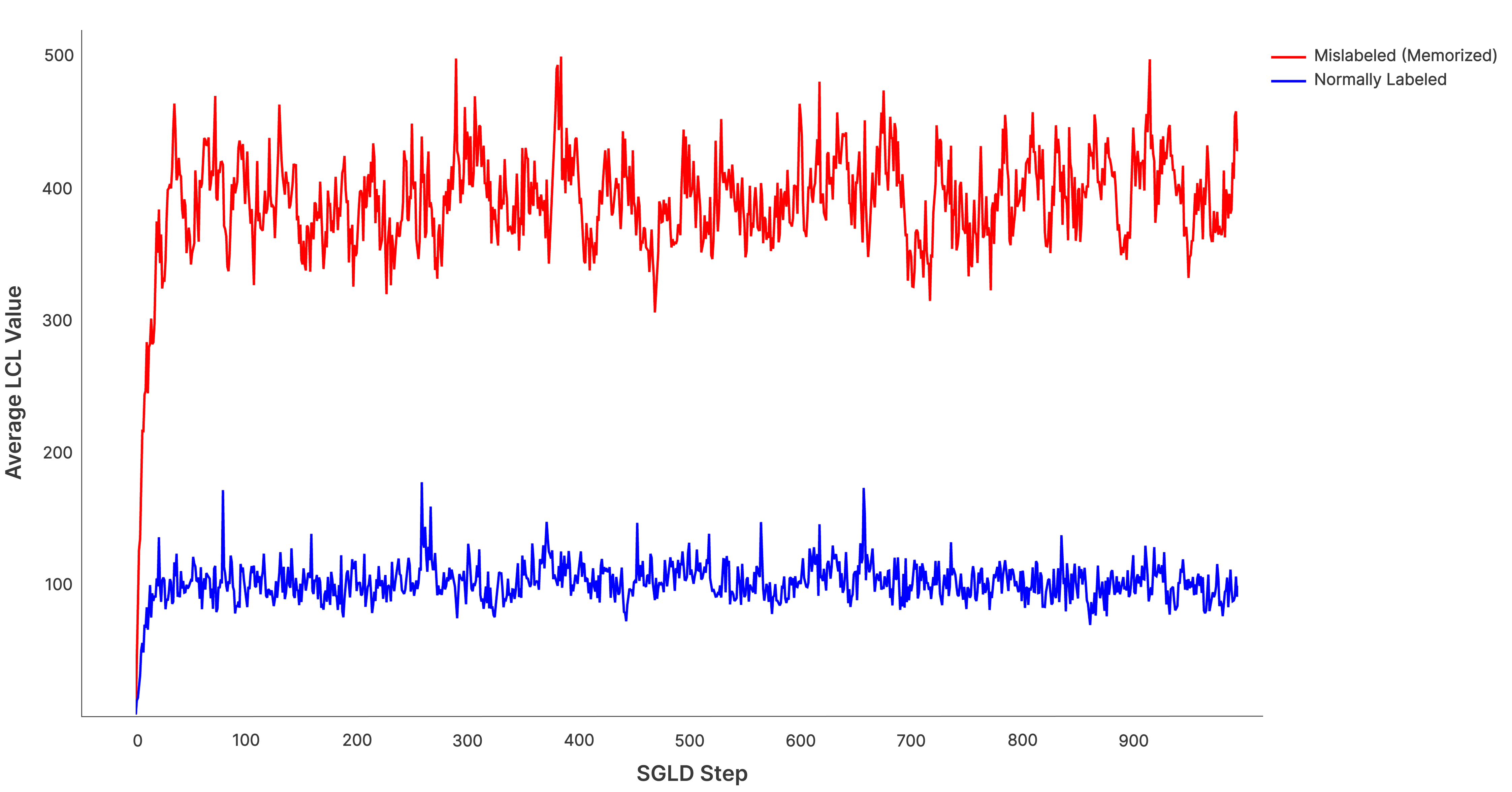

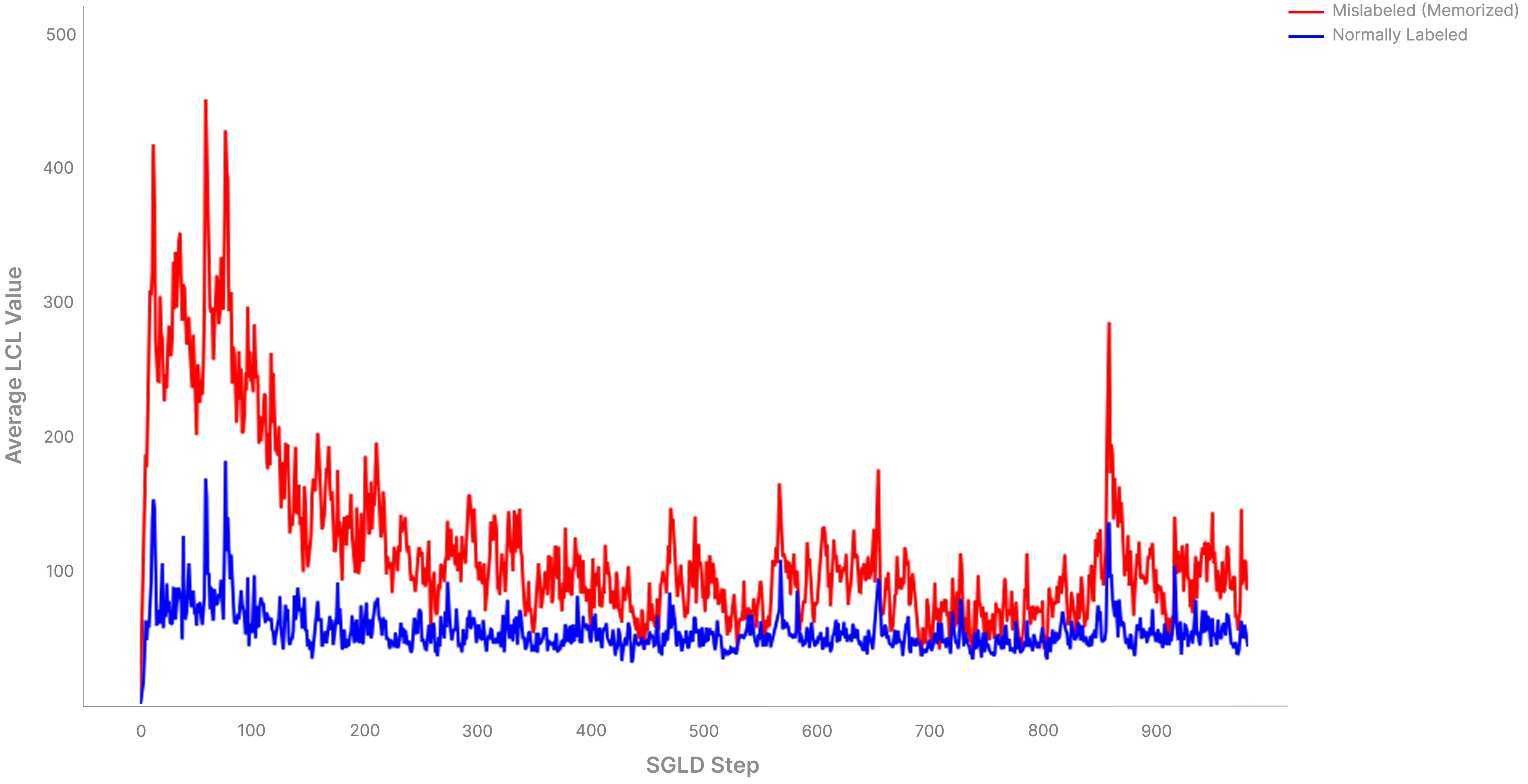

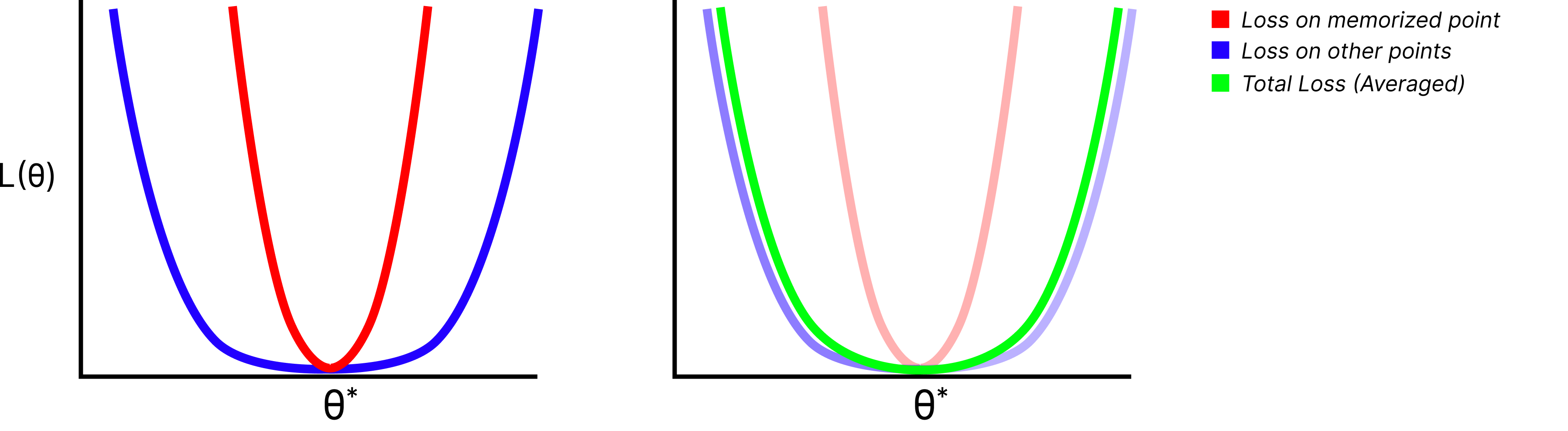

(19:48) A Synthetic Memorization Task

(22:10) pLLC in the Wild

(23:54) Alternative Methods

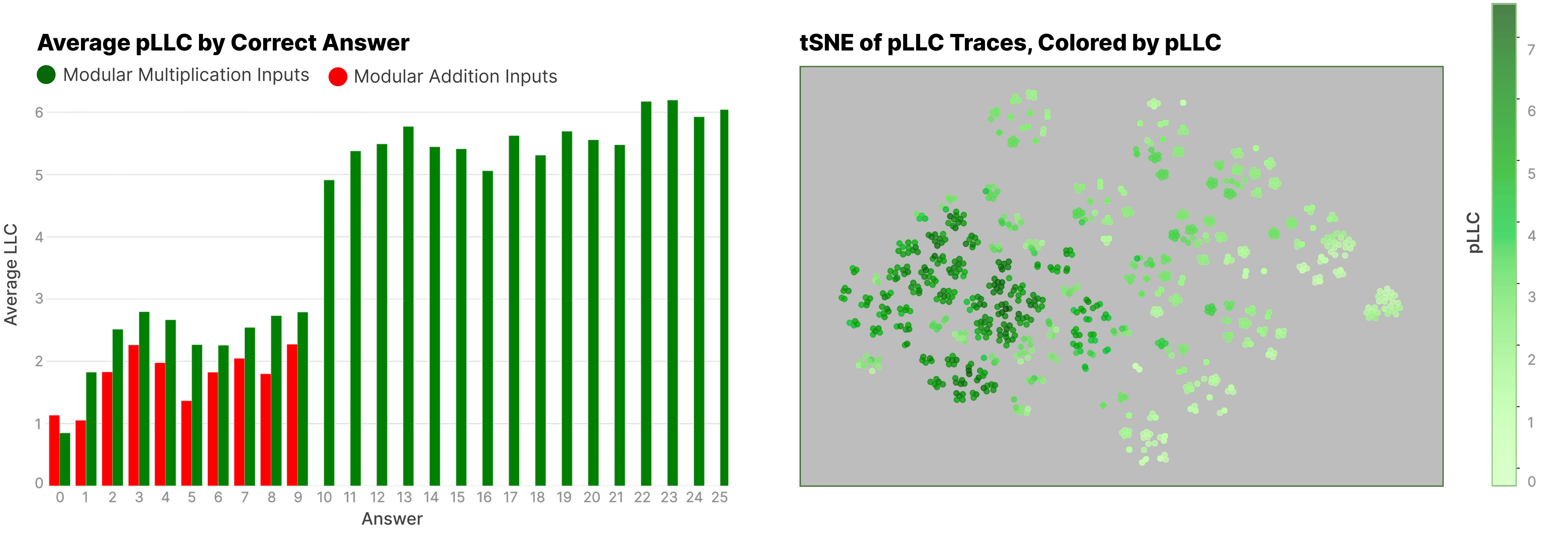

(25:40) Beyond Averages

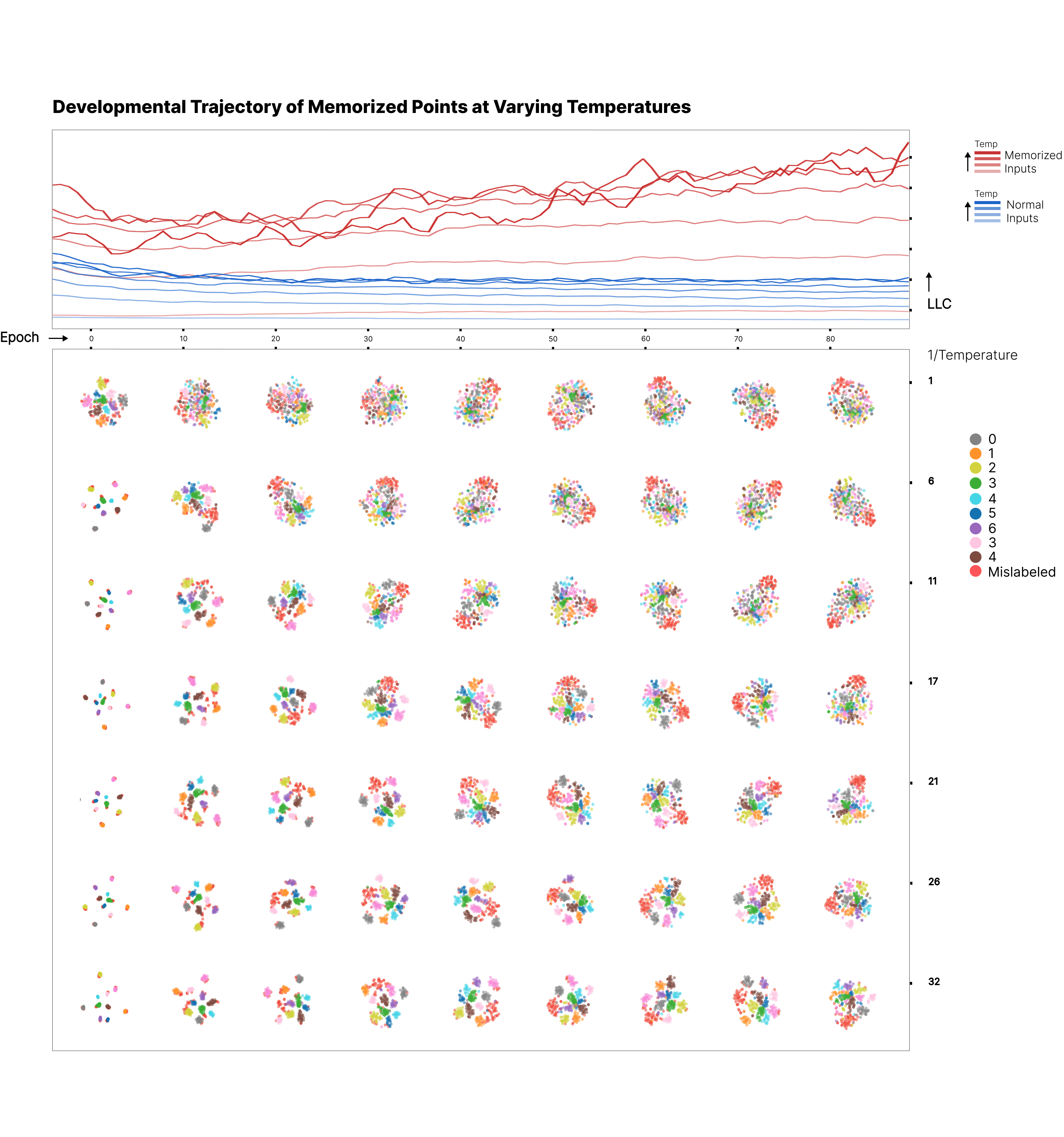

(28:46) A Gradual Noising

(30:46) Interpretable Fragility

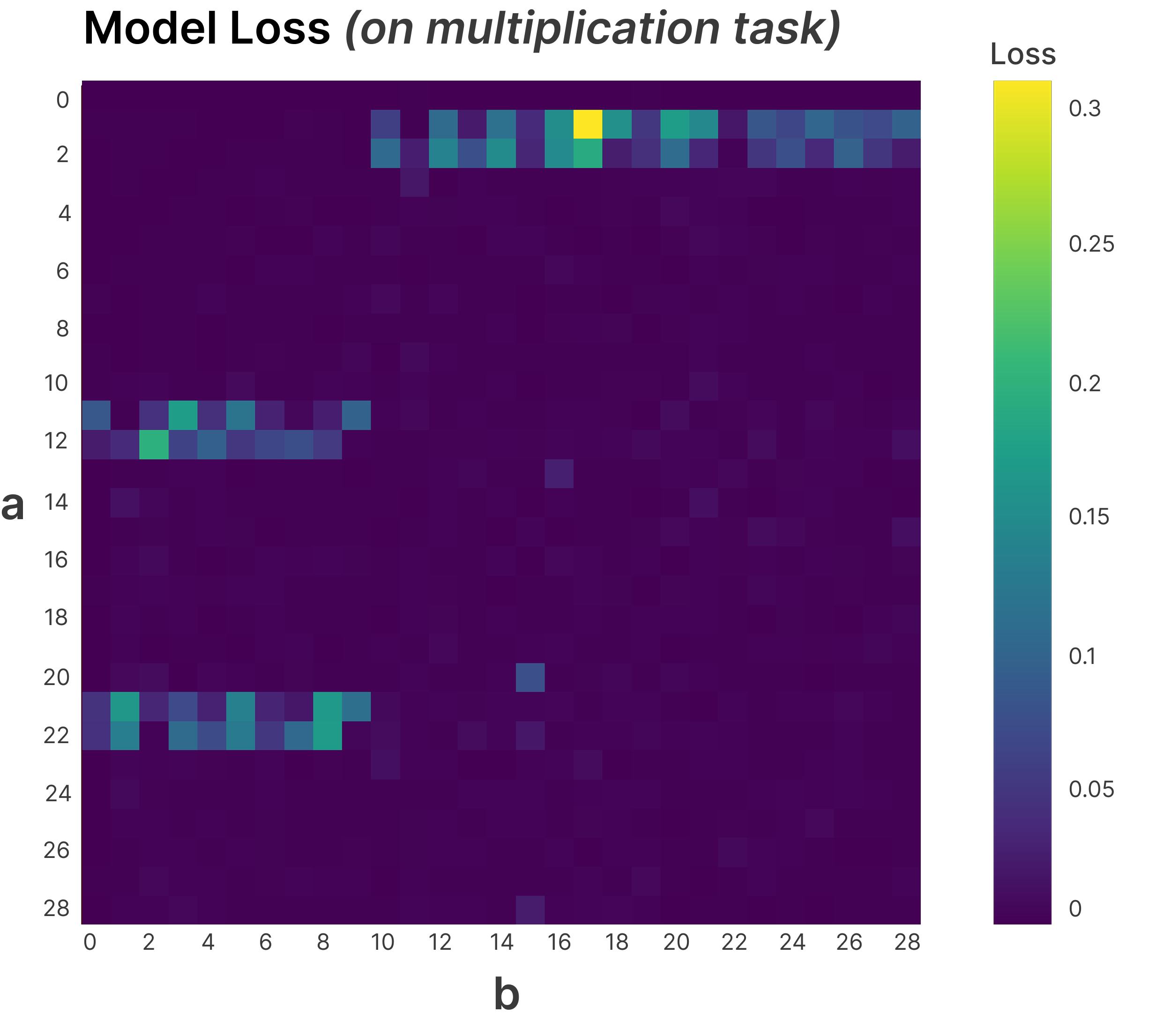

(33:56) Beyond Memorization - Finding More Complex Circuits

(35:00) The Setup

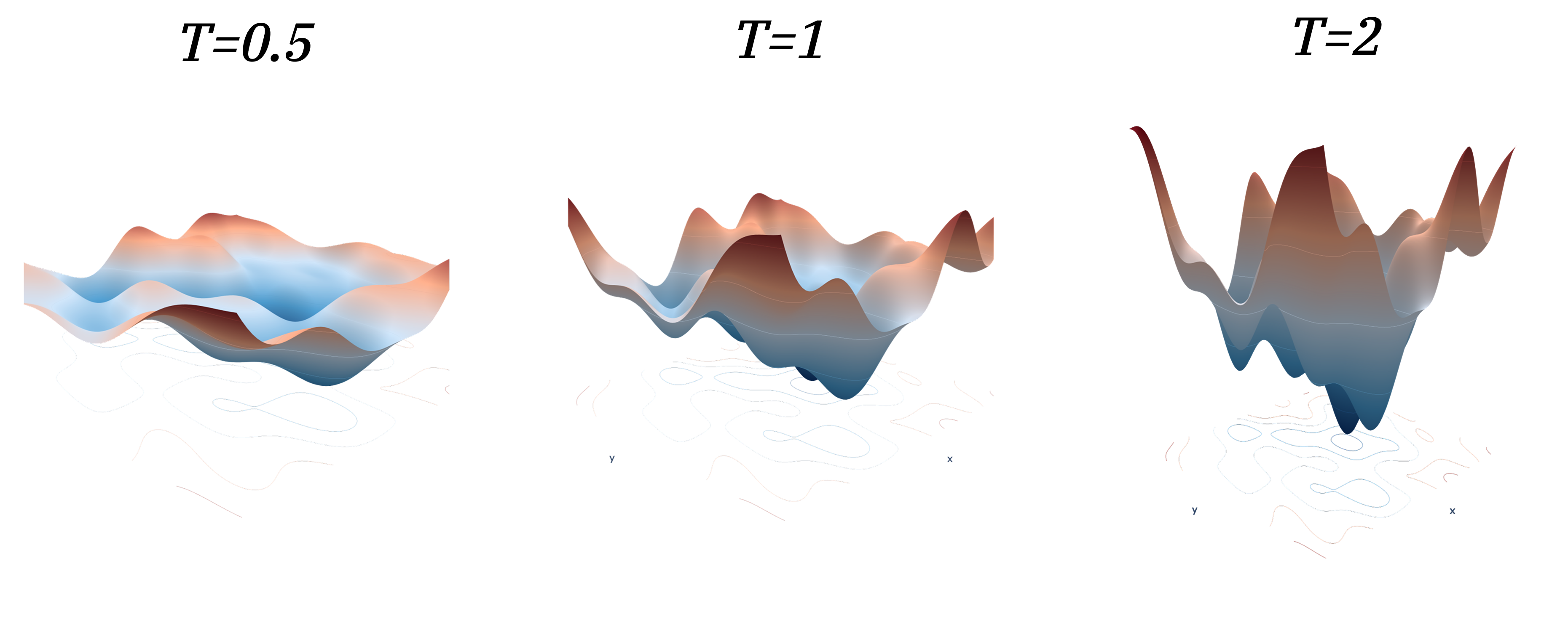

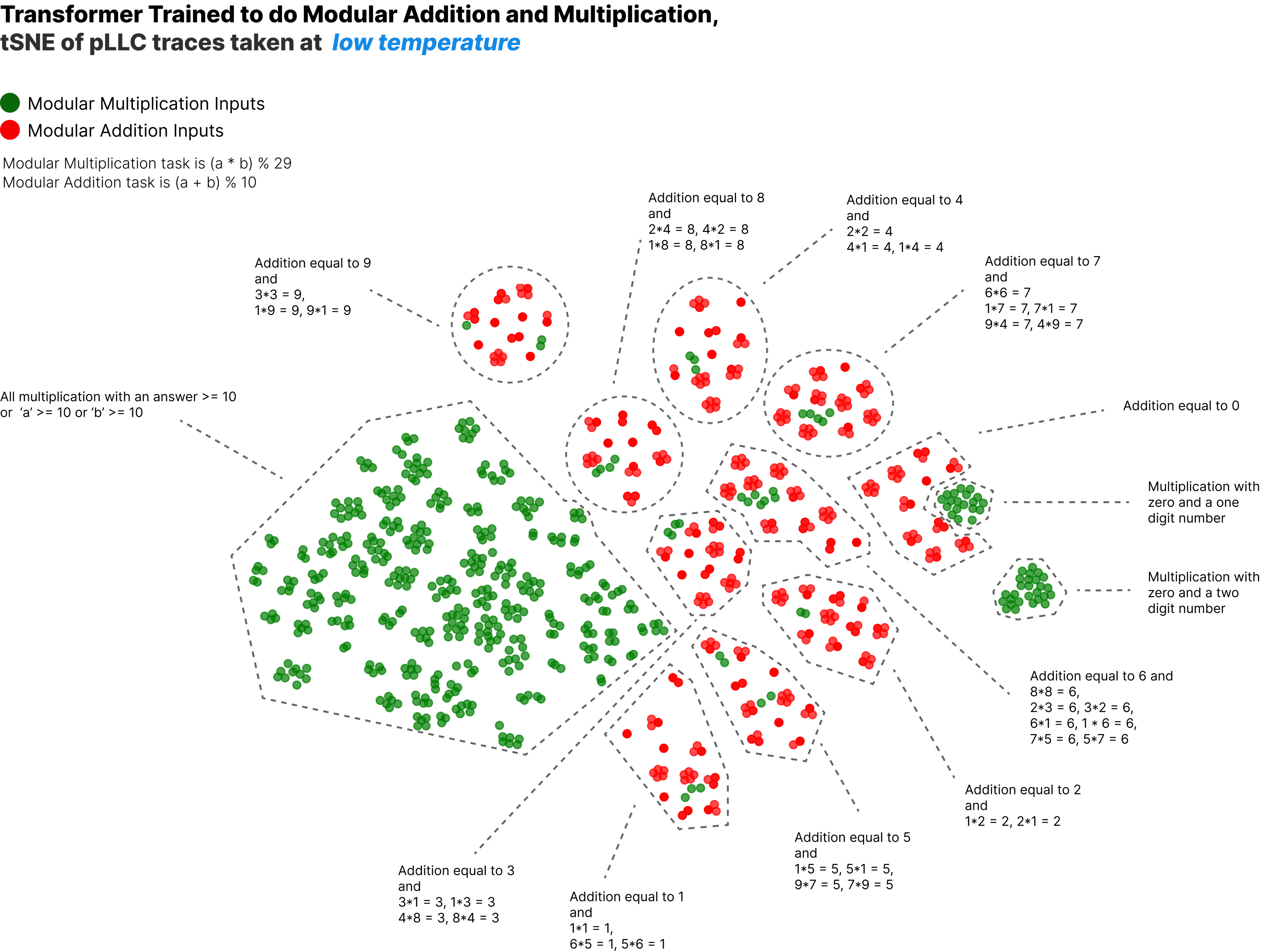

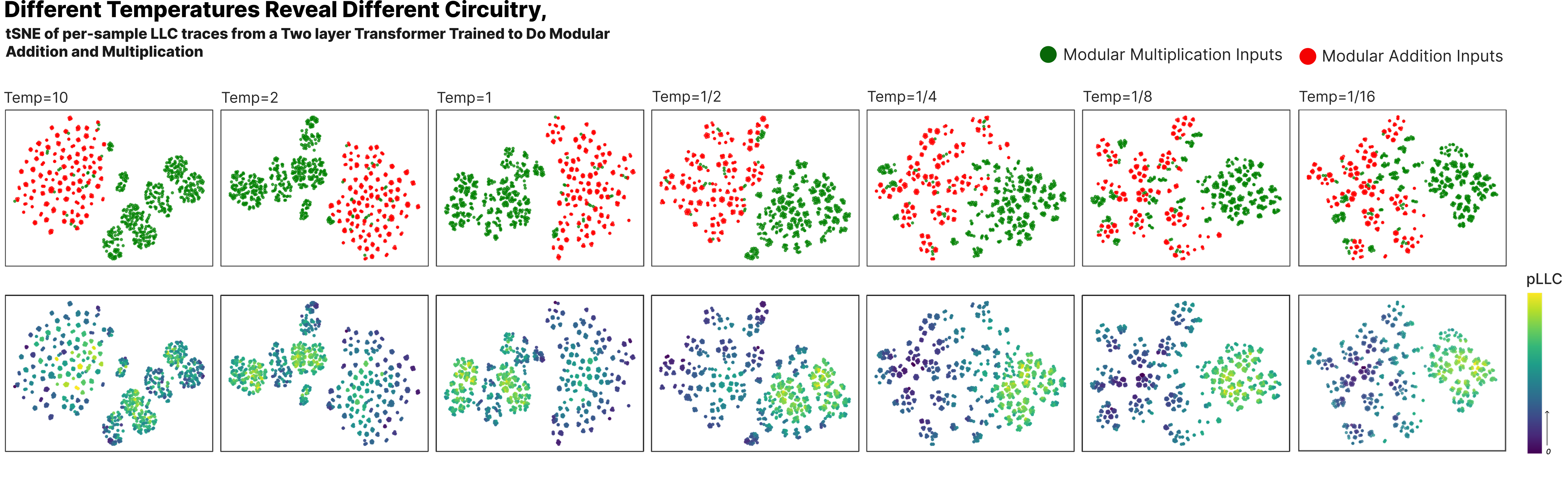

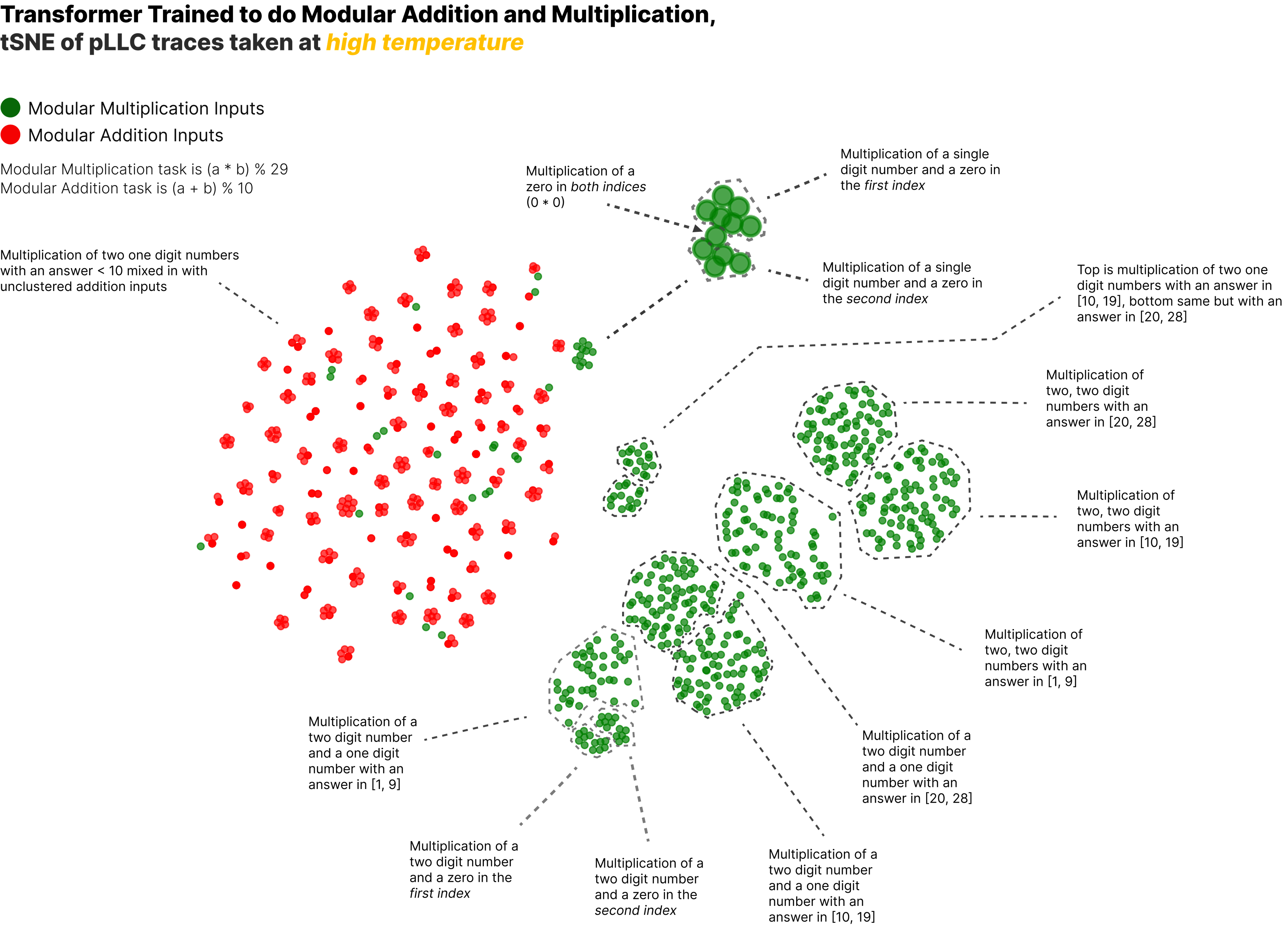

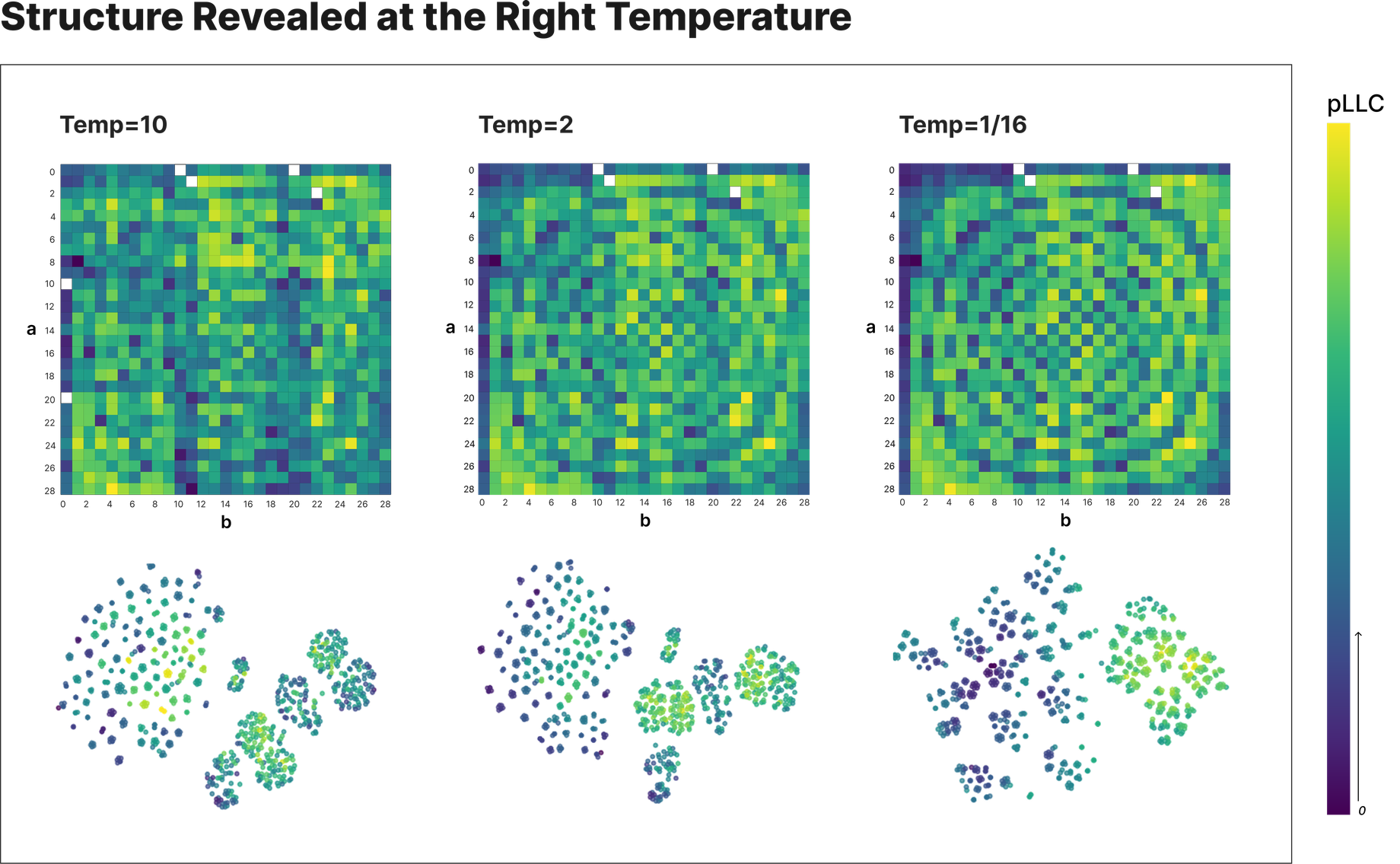

(39:15) Structure Across Temperatures

(42:59) Spirals in the Machine

(45:26) But How? - Temperature

(47:50) Renormalization With Temperature

(49:52) What This Might Mean in Circuit-Land

(51:39) Conclusion

(53:06) Future Work

(55:57) Appendix

(56:00) Scaling Mechanistic Detection of Memorization

(59:03) Unsupervised Detection of Memorized Trojans

(59:58) MNIST, Again

(01:01:24) Notes on Circuit Clustering

(01:02:24) Notes on SGLD Convergence

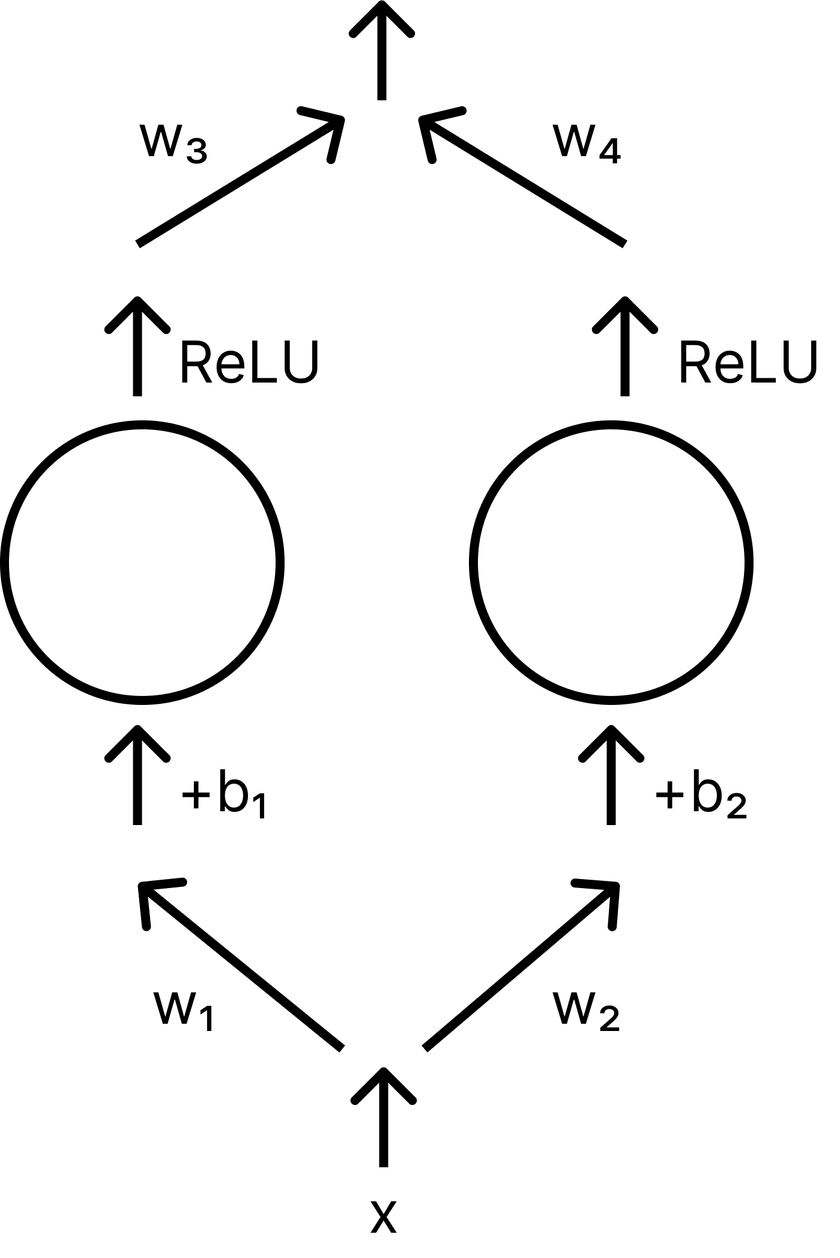

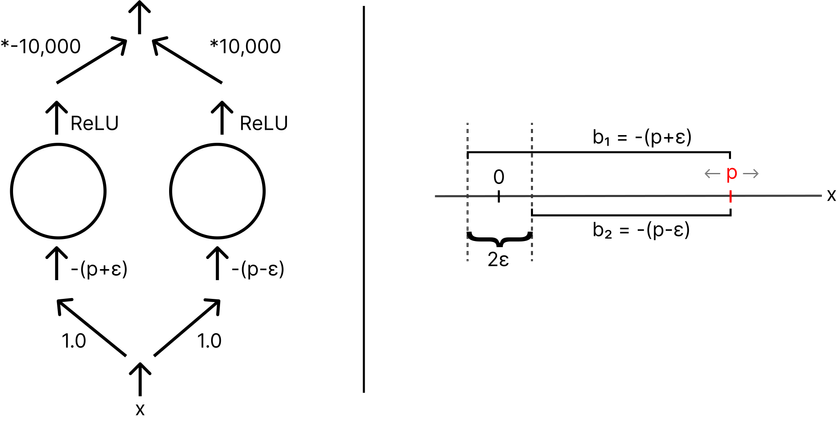

(01:04:00) A Toy Model of Memorization

(01:09:16) What does being singular mean?

The original text contained 4 footnotes which were omitted from this narration.

---

First published:

March 14th, 2025

Source:

https://www.lesswrong.com/posts/eLAmp2pAAvZiBweCB/interpreting-complexity

Narrated by TYPE III AUDIO.

---

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.